Robots can deliver food on college campuses and hit a hole in one on the golf course, but even the most advanced robots cannot perform the basic social interactions of everyday human life.

MIT researchers have now incorporated certain social interactions into a framework for robotics, allowing robots to understand what it means to help or hinder one another and to learn to perform those social behaviors themselves. In a simulated environment, a robot watches its companion, guesses what task it wants to accomplish, and then helps or hinders the companion based on its own goals.

The researchers say their model creates realistic and predictable social interactions. When they showed human viewers videos of the simulated robots interacting with one another, the viewers generally agreed with the model about what type of social behavior was taking place.

Giving robots social skills could lead to smoother, more positive human-machine interactions. For example, robots in assisted living facilities could use their social skills to provide more care for the elderly. The new models could also allow scientists to quantitatively measure social interactions, helping psychologists study autism or analyze the effects of antidepressants.

"Robots will soon be living in a human world for a long time. They need to learn how to communicate with us in a human way. They need to know when to help. This is very basic work, and we're just scratching the surface, but I feel like this is the first serious attempt to better understand what it means for humans and robots to interact socially." Researcher Boris Katz.

Social environment simulation

The researchers created a simulated environment in which the robots moved through a two-dimensional grid, pursuing set physical and social goals.

A physical goal relates to the environment. For example, a robot's physical goal might be to navigate to a tree at a certain point on the grid. A social goal involves guessing what the other robot is trying to do and then acting on that guess, such as helping the other robot water the tree.
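As a rough illustration of these two kinds of goals, the sketch below uses a small grid in which a physical goal is scored by negative Manhattan distance to a target cell, and a social goal starts with a guess about where the companion is heading. The function names, the distance-based score, and the inference rule are assumptions made for this example, not the researchers' implementation.

```python
# Hypothetical grid-world sketch: names and scoring rules are illustrative only.
MOVES = {"up": (0, -1), "down": (0, 1), "left": (-1, 0), "right": (1, 0), "stay": (0, 0)}


def manhattan(a, b):
    """Grid distance between two (x, y) cells."""
    return abs(a[0] - b[0]) + abs(a[1] - b[1])


def physical_reward(pos, goal):
    """Assumed physical reward: higher (less negative) when closer to the goal."""
    return -manhattan(pos, goal)


def step(pos, move, size):
    """Apply a move and clamp the result to a size x size grid."""
    dx, dy = MOVES[move]
    x = min(max(pos[0] + dx, 0), size - 1)
    y = min(max(pos[1] + dy, 0), size - 1)
    return (x, y)


def greedy_move(pos, goal, size):
    """Pick the move whose resulting cell has the best physical reward."""
    return max(MOVES, key=lambda m: physical_reward(step(pos, m, size), goal))


def walk(start, goal, size, max_steps=50):
    """Follow greedy moves until the goal is reached or max_steps is hit."""
    path = [start]
    while path[-1] != goal and len(path) <= max_steps:
        path.append(step(path[-1], greedy_move(path[-1], goal, size), size))
    return path


def infer_goal(trajectory, candidates):
    """Guess the companion's goal: the candidate it has moved closest toward."""
    first, last = trajectory[0], trajectory[-1]
    return max(
        candidates,
        key=lambda name: manhattan(first, candidates[name]) - manhattan(last, candidates[name]),
    )


if __name__ == "__main__":
    print(walk((0, 0), (3, 2), size=5))
    observed = [(0, 0), (1, 0), (2, 0)]
    print(infer_goal(observed, {"tree": (4, 0), "rock": (0, 4)}))
```

Here `walk` covers the physical goal of reaching the tree, while `infer_goal` stands in for the guessing step that a social goal depends on.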

The researchers used the model to specify what a robot's physical goals are, what its social goals are, and how much emphasis it places on each. The model includes a planning algorithm that decides which actions the robot should take. The robot is rewarded for actions that bring it closer to its goals, and that reward guides it toward both its physical and its social goals.
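One way to picture how emphasis on physical and social goals could be combined is a weighted sum of two reward terms. The sketch below is an assumption-laden illustration: the `Outcome` type, the weights, the candidate actions, and their numeric effects are all hypothetical, and a positive social weight is taken to mean helping while a negative one means hindering.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Outcome:
    """Hypothetical effect of one candidate action."""
    own_progress: float    # change in the robot's own physical reward
    other_progress: float  # estimated change in the companion's reward


def blended_reward(outcome, physical_weight, social_weight):
    """Weighted sum of the physical term and the social term."""
    return physical_weight * outcome.own_progress + social_weight * outcome.other_progress


def choose_action(outcomes, physical_weight, social_weight):
    """Simple one-step planner: pick the action with the highest blended reward."""
    return max(
        outcomes,
        key=lambda action: blended_reward(outcomes[action], physical_weight, social_weight),
    )


# Made-up candidate actions and effects, for illustration only.
candidates = {
    "move_toward_own_goal": Outcome(own_progress=1.0, other_progress=0.0),
    "clear_companion_path": Outcome(own_progress=-0.5, other_progress=2.0),
    "block_companion_path": Outcome(own_progress=-0.5, other_progress=-2.0),
}

if __name__ == "__main__":
    print(choose_action(candidates, physical_weight=1.0, social_weight=1.0))   # helper
    print(choose_action(candidates, physical_weight=1.0, social_weight=-1.0))  # hinderer
    print(choose_action(candidates, physical_weight=1.0, social_weight=0.0))   # no social goal
```

Changing only the social weight switches the same planner between helping, hindering, and ignoring the companion, which is the kind of emphasis setting the paragraph above describes.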

"We opened up a new mathematical framework for simulating social interactions between two subjects. If you're a robot and you want to go to position X, and I'm another robot and I see that you want to go to position X, I can help you get to position X faster. It could be getting X closer to you, finding another better X, or taking whatever action you have to take to get to X." Tejwani said.

The researchers used this mathematical framework to define three types of robots. Level 0 robots have only physical goals and cannot reason socially. Level 1 robots have both physical and social goals but assume that other robots have only physical goals, so they can help or hinder other robots in achieving those physical goals. Level 2 robots assume that other robots have both physical and social goals, and they can take more complex actions, such as helping other robots achieve both kinds of goals.
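The three levels can be read as a nested definition: each level's reward includes an estimate of the reward of a companion modeled one level lower. The sketch below expresses that reading in a few lines. The `Robot` class, the distance-based physical reward, and the recursion are assumptions made for illustration, not the published formulation.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple

Pos = Tuple[int, int]
State = Dict[str, Pos]  # robot name -> grid position


@dataclass
class Robot:
    """Hypothetical robot model; model_of_other is None for a level 0 robot."""
    name: str
    goal: Pos
    social_weight: float = 0.0  # > 0 helps, < 0 hinders (assumed convention)
    model_of_other: Optional["Robot"] = None

    @property
    def level(self) -> int:
        # A robot sits one level above the companion it imagines.
        return 0 if self.model_of_other is None else 1 + self.model_of_other.level


def physical_reward(robot: Robot, state: State) -> float:
    """Assumed physical reward: negative Manhattan distance to the robot's goal."""
    x, y = state[robot.name]
    gx, gy = robot.goal
    return -(abs(x - gx) + abs(y - gy))


def total_reward(robot: Robot, state: State) -> float:
    """Physical reward plus a weighted estimate of the modeled companion's reward."""
    reward = physical_reward(robot, state)
    if robot.model_of_other is not None:
        reward += robot.social_weight * total_reward(robot.model_of_other, state)
    return reward


state = {"A": (0, 0), "B": (2, 0)}
b0 = Robot("B", goal=(2, 2))                                      # level 0
a1 = Robot("A", goal=(0, 3), social_weight=1.0, model_of_other=b0)  # level 1 helper
b1 = Robot("B", goal=(2, 2), social_weight=1.0,
           model_of_other=Robot("A", goal=(0, 3)))               # level 1 companion
a2 = Robot("A", goal=(0, 3), social_weight=1.0, model_of_other=b1)  # level 2

if __name__ == "__main__":
    for robot in (b0, a1, a2):
        print(robot.name, robot.level, total_reward(robot, state))
```

In this reading, a level 2 robot's reward already accounts for the companion's own wish to help or hinder, which is what lets it reason about the companion's social goals as well as its physical ones.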

Human audience assessment

To compare the model with human judgments of social interaction, the researchers created 98 different scenarios, had 12 people watch 196 video clips of robots interacting, and then asked them to estimate the robots' physical and social goals. For the most part, the human viewers agreed with the model about the social interactions taking place.

"We wanted to look at these videos for characteristics of human understanding of social interaction. We might do a test that identifies social interactions, provides a way to guide people through social interactions, and thus enhance their social skills. We're still a long way from that, but even just effectively measuring social interactions is a big step forward." Barbu said.

A more mature model

The researchers are working on a system with 3D agents in an environment that allows for more types of interactions. They also plan to add a neural network-based planner to the model, one that learns from experience and runs faster. In addition, they want to conduct an experiment to collect data on the features humans use to recognize social interactions between robots.

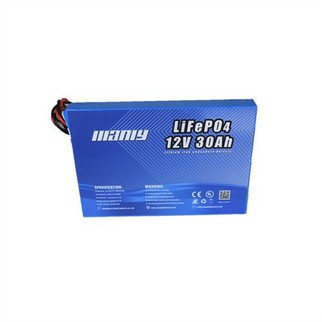

About Manly Battery

Located in Shenzhen, China, Manly Battery has been a leading name in lithium batteries for over 12 years, and its batteries are widely used in robotics. If you have a project that needs evaluating, please feel free to send an email to info@manlybatteries.com.